A Million Token Context Window Isn't What You Think It Is

Updated

TL;DR

Anthropic recently announced that "1 Million token context window" is now generally available to all users for Claude Opus 4.6 and Sonnet 4.6 models. It's amazing that in a couple of years, we went from GPT-3 with a 4K token context window (feels ancient), to Google becoming the first AI company to introduce a 1M token context window AI model, and Meta taking it as far as introducing a 10M token context window model "Llama 4 Scout".

Now all of this looks good on paper, but is it actually good or are the AI companies cashing in on the idea of a larger context window? There's a catch, let me explain.

Table of Contents

What Is The Context Window?

A context window is the maximum amount of input (that includes responses) an AI model can "see" at once, both what I send it (the input) and what it generates (the output). It's measured in tokens (chunks of input/output), where the token length varies per AI model implementation.

Think of it like a desk. Everything I want the model to work with (my ask, the documents I paste in, the conversation history) has to fit on that desk. If something doesn't fit, it gets left out entirely. The model has zero knowledge of anything outside its context window.

When I send a prompt, the model reads the entire context window at once. It processes all tokens simultaneously using an attention mechanism. This mechanism decides which parts of the input are the most relevant to generating each word of the output.

Every new message in a conversation gets appended to the existing context. Once the total (input + output) exceeds the window limit, the model forgets the oldest messages (however, modern AI agents do automatic "compaction", which preserves just the summary of the conversation to try to fit it in). This is why long conversations can feel like the model "lost track" of something you said earlier.

The Actual Good

A larger context window has a lot of great use cases, such as:

- Feeding an entire codebase in one go so the model understands how everything connects.

- Analyzing long documents (legal contracts, research papers, books) without processing or splitting them into chunks.

- Maintaining long conversations where earlier context matters (e.g. a back-and-forth code debugging session).

- Reducing the need for complex retrieval pipelines (RAG). Instead of searching a vector database for relevant snippets, you just paste it in.

The Catch

The bigger the context, the higher the risk of the "lost in the middle" problem. The "lost in the middle" problem is where models pay strong attention to the beginning and end of the long context but degrade significantly at recalling information placed in the middle.

This was documented by Liu et al. in their 2023 research paper "Lost in the Middle: How Language Models Use Long Contexts". They tested models on multi-document question answering and key-value retrieval, placing the relevant information at different positions within the input. They found that the performance was highest when the answer was at the very beginning or very end, and dropped sharply when it was in the middle, even for models explicitly designed for long contexts.

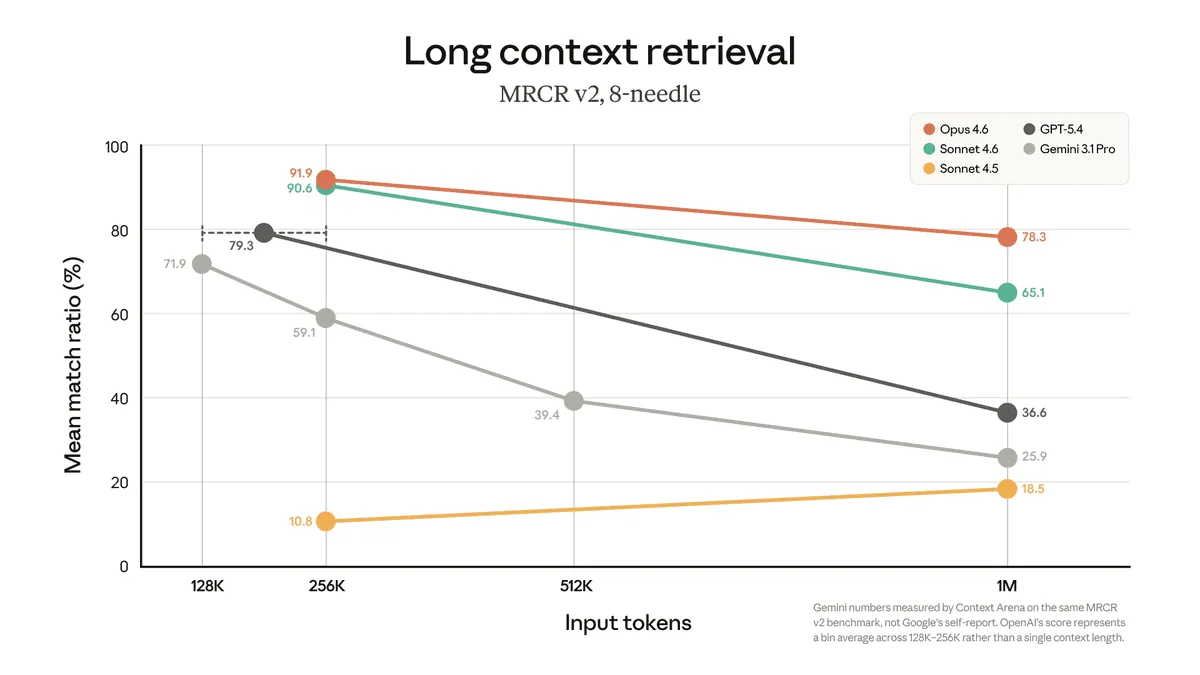

The chart above shows long context retrieval performance across models using the MRCR v2 benchmark with 8 needles (kind of finding needles in a haystack), which is a more demanding multi-needle retrieval test.

- Even the best model (Opus 4.6) drops from ~92% mean match ratio at 256K to ~78% at 1M tokens.

- GPT-5.4 falls from ~80% at 128K to ~37% at 1M (massive degradation).

- Gemini 3.1 Pro goes from ~59% at 256K down to ~26% at 1M.

- Sonnet 4.5 barely crosses ~19% at 1M.

But why? It's fundamental to how AI models work and how they are designed.

The architecture behind every major LLM uses something called self-attention (Vaswani et al., "Attention Is All You Need," 2017). Think of it this way, when the model is generating the next word, it looks back at the entire input and asks "which parts of this input matter the most for what I'm about to generate?" It assigns a relevance score to every token in the input and uses those scores to decide what to focus on.

The catch is that these scores have to add up to 100%. With a short input (for example, 4K tokens), it's easy to give meaningful attention to the important parts. But with 1M tokens, that same 100% gets spread across a million candidates. The important stuff (if it's in the middle) now has to compete with a massive amount of surrounding text for the model's focus, and it often loses.

It's like being in a quiet room vs. a stadium. In the quiet room, I can hear someone whisper from across the table. In the stadium, that same whisper gets drowned out by the crowd noise, even though the person is saying something important.

The more you use the context window, the worse outcomes you'll get. This leads to an academic concept called the "dumb zone". Around the 40% line is where you're going to start to see some diminishing returns depending on your task. - Dex Horthy, HumanLayer

Also, AI models need to know the order of tokens, i.e., which word comes first, which comes last. This is done through positional encodings, which are like invisible tags that say "this token is at position 1," "this token is at position 500,000," and so on. Popular methods like RoPE (Rotary Position Embedding, Su et al., 2021) encode how far apart two tokens are. This creates a recency bias and the model naturally pays more attention to tokens that are closer to the end of the input (near where it's generating) and less attention to tokens that are far away.

Peysakhovich and Lerer showed this directly in "Attention Sorting Combats Recency Bias In Long Context Language Models" (2023). Even when a relevant document is placed early in the context, the model pays less attention to it, not because it doesn't recognize it as relevant, but because its position makes it inherently less "visible" to the attention mechanism. The model has a built-in preference for recent text, learned during training.

All of these factors affect how AI models respond when given a long context window.

Conclusion

Larger context windows are a genuine advancement. Being able to feed an entire codebase or a bunch of novels into a single prompt is incredibly useful. But a model accepting 1M tokens is not the same as a model using 1M tokens well.

Don't blindly dump everything into the context window just because you can. For tasks that require finding specific details in large documents or documents which change frequently, a well-designed RAG pipeline will often outperform raw long context.

Context windows will keep getting better as architectures improve, but for now, understanding the trade-offs is what matters.